Jessica is a leading media industry figure and an expert in the field of disinformation, currently working as a consultant to media and tech companies. She founded the Trusted News Initiative (TNI), the world’s only alliance of major international tech companies and news organisations (Meta, Google, Twitter and Microsoft included) to counter the most harmful disinformation in real time.

We had the pleasure of meeting her at the 5th Polaris Leadership Summit, where she hosted the ‘Winning the fight against disinformation’ panel.

As the founder of TNI, could you explain how the alliance effectively collaborates to counter the most harmful disinformation in real time?

It’s crucial to emphasize that the TNI is not a comprehensive solution to the problem of disinformation. Back in 2019, when India was holding elections, the BBC encountered a significant amount of disinformation. Fake polls falsely attributed to the BBC were circulating, misleading the public. We realized that we were helpless in countering this disinformation.

That’s when we made a pivotal decision. We wanted to establish a mechanism that could address dangerous disinformation that had the potential to sway voters’ decisions during elections, especially when it directly endangered lives. We urgently needed a means to communicate and collaborate effectively.

The outcome was the birth of the TNI in 2019. This platform brings together tech platforms and news organizations to collectively define the types of disinformation deemed most dangerous. We reached a clear agreement that any disinformation posing an immediate threat to life or the integrity of the electoral system would be the focus of our cooperation.

Additionally, we established a fast alert system, ensuring that we promptly notify one another about such disinformation. It’s important to note that the Trusted News Initiative doesn’t dictate what each member organization should or shouldn’t publish. Instead, it’s about sharing vital information and being informed.

Here are a couple of examples:

During the Covid crisis, we uncovered attempts to spread disinformation on social platforms, targeting the 5G infrastructure. These false claims not only linked Covid to 5G but also aimed to harm the 5G infrastructure being used for emergency services, which were critical for saving lives. We considered this disinformation a matter of great significance, demanding heightened awareness and vigilance.

Another example is the issue of imposter content, where bad actors misuse the logos of trusted news organizations on social media. They publish fabricated content that appears to be from reputable sources, such as a tweet falsely reporting the death of then-British Prime Minister Boris Johnson, adorned with BBC branding. At first glance, it seemed absolutely believable, and it could have caused chaos if widely spread. However, one of our partners in the TNI alerted us to this fake tweet. We immediately shared the information within the network, which included Twitter itself. Consequently, the tweet was taken down quickly. Although a television station in Pakistan briefly rebroadcast it, the dissemination was limited, thanks to the prompt action.

These examples demonstrate the tremendous potential for cooperative real-time information sharing. The Trusted News Initiative does not aim to dictate the actions of organizations or replace regulation, which is vital. Instead, it serves as an additional tool in our arsenal to combat disinformation effectively.

The fight against disinformation often requires striking a balance between countering false narratives and upholding principles of free speech. How does the Trusted News Initiative navigate this delicate balance?

Again, it’s crucial to highlight that many members of the TNI strongly advocate for free speech. The prominent news organizations involved, such as the BBC and Reuters, hold free speech as a fundamental value. It’s important to note, though, that there is a high threshold for concern within the initiative. The examples I mentioned earlier, where disinformation poses an immediate threat to life or the integrity of the electoral system, are instances where action is necessary. In particular, when imposters attempt to exploit the branding of a specific news organization, it becomes imperative to address the issue.

In these cases, it’s not a matter of unduly restricting free speech. It’s about safeguarding the well-being of individuals and preserving the integrity of vital systems. Upholding free speech is undoubtedly essential, but we must recognize that there have always been and will always be limits to free speech when lives are at stake. As the old saying goes, ‘It’s not free speech to shout “fire” in a crowded theatre.’

What role should media and tech companies play in addressing the spread of disinformation? And what steps can be taken to foster stronger collaboration and coordination among these stakeholders in the battle against disinformation?

I believe there are multiple perspectives to consider when addressing the issue of disinformation.

Regulation is undeniably crucial, but it does have its limitations. One of the drawbacks is its inherent slowness. By the time regulations are implemented, disinformation trends may have evolved, rendering them less effective. Additionally, regulations are bound by the specific countries in which social platforms operate, which can create challenges in enforcing consistent standards.

That’s why I believe there should be a complementary approach of cooperative self-regulation. This approach can offer agility and responsiveness, as it allows for quicker analysis of emerging trends and threats. Importantly, it can transcend national borders, recognizing that disinformation can rapidly spread across and between countries on social platforms.

When it comes to social media, self-regulation may pose certain difficulties. The nature of social media makes it challenging to exercise complete control at all times. Imposing strict regulations on social media platforms may not always be feasible or effective. It becomes crucial to explore alternative strategies that strike a balance between self-regulation and external regulations in this dynamic landscape.

Looking ahead, what do you see as the most pressing challenges in the fight against disinformation, and how can organizations like TNI adapt and innovate to stay ahead of evolving tactics? Are there any emerging technologies or strategies that hold promise in countering disinformation?

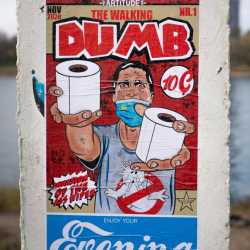

I think that generative AI poses a whole new level of challenge and complexity when it comes to disinformation. In the pre-generative AI era, one could argue that a reasonably well-informed individual could spot disinformation to some extent. However, the very plausibility that generative AI brings, whether it’s text generated from search queries or complete audio, video, and images that appear entirely genuine, makes it harder to discern what is fake. Media education remains incredibly important, but it cannot be solely relied upon as a silver bullet because these things are becoming harder to distinguish.

While I cannot speak on behalf of the TNI anymore, I strongly believe that cooperation between tech companies, AI companies, tech platforms, and news organizations is fundamental in this new environment. They need to work together to develop ways to detect and identify fake content stemming from generative AI. Close collaboration and timely warning systems among these entities become even more vital in combatting what they define as dangerous disinformation.

The idea that one can rely on the average well-educated person to spot fakes is no longer applicable. We should explore leveraging tools like ChatGPT or other AI systems to create effective means of fighting disinformation. As for specific instances of AI-generated disinformation, we have seen its potential, but because the technology is so new we have yet to witness its full application.

Looking ahead, with major elections taking place next year in the UK and the US, it becomes increasingly important to harness the power of AI tools to quickly understand and alert all participants to the most potentially harmful disinformation.

It’s important to recognize that having a single arbiter to determine what is and isn’t disinformation is not a realistic approach. We can strive to establish loose alliances and prioritize certain aspects such as threats to life, disinformation concerning climate issues, and other crucial areas. Although there will always be challenging edge cases, when it truly matters, it is essential to foster cooperation and collaboration.

I hope that the principles of the TNI are embraced elsewhere, serving as a valuable resource during times of significant jeopardy in other regions. It is vital to understand that while it may not provide a comprehensive solution to disinformation everywhere, it can be a powerful tool in specific contexts.

Featured image: Produtora Midtrack / Pexels