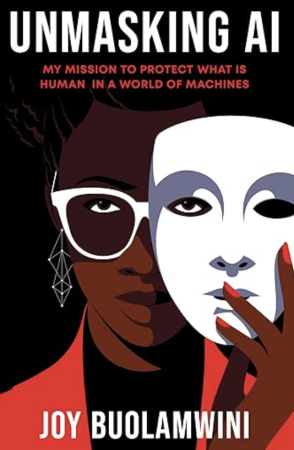

If I had to choose one book I’d recommend to anyone who is interested in the future of AI, it would be Unmasking AI by Joy Buolamwini. Coincidentally, I had been lucky enough to read an advance copy ahead of the AI Summit earlier this month. The long-awaited event brought together business people, world leaders, think tanks and NGOs with the common goal of ensuring that we harness the potential of AI for good.

Most conversations around AI this past year have been dominated by one of two extremes

On one side are those who sing the praises of chatbots and hope that they’ll create a world in which we never have to do tedious work again; on the other are existential fears of AI eradicating the human race. When it comes to AI, it seems there is no in between. But computer scientist Joy Buolamwini, named this year as one of TIME’s most influential people in AI, debunks this trope in her debut book.

A former scholar at MIT’s Media Lab, she first experienced the biases that are deeply ingrained in our tech while working on her first design project at the institution. She was creating what she called the Aspire Mirror, which would use face-tracking software to register movements of the user’s face and overlay them onto an aspirational figure in the reflection. But when the software wouldn’t recognise her until she held a white mask over her face, she was confronted head-on with what she’d later come to call the coded gaze.

Whistleblowers like Joy Buolamwini are sounding the alarm on the harms that are already emerging from the use of AI. One key area that reveals the dark side of AI as it stands is its growing use in the workplace. Just this year, the Trades Union Congress formed a taskforce specifically focused on the rising threat of AI to workers’ rights. In the same realm, warehouse workers in Coventry made history with the UK’s first Amazon strike, demanding that the company ditch algorithmic management techniques that leave them overworked and often experiencing poor physical and mental health as a direct consequence.

Ensuring safety in an AI world

Whilst these might not be sexy battles, ensuring that the foundations of our digital world have safeguards in place will prevent those existential crises from happening. Without tackling the drab and slow-moving nature of legislation, we are going to open ourselves up to a future of chaos. As over 100 trade unions and civil society organisations highlighted in their open letter following the summit, a focus on speculative risks in the future over the real-world harms that are already unfolding is holding us back.

Arguably, one of the main questions we ought to ask ourselves when we consider AI is: do we really need this technology? Should AI replace a boss, or can a human do a better job of managing people? Should judges be guided by calculations and predictions, or instead use their human judgement to make life-changing decisions about a defendant’s future? Can algorithms be trusted to make appropriate hiring and firing decisions, given that they are trained on data that can be tainted with sexist, racist and ableist prejudices that come from historical inequalities?

These are all questions that we ought to be asking and discussing collaboratively, instead of leaving tech bosses to make those decisions on our behalf. AI is not going anywhere, but in its current form, we have a long way to go. Human instinct and our ability to contextualise people’s behaviour are among our greatest assets, and this is something that artificial intelligence still lacks.

AI isn’t some omnipotent force that we stand at the mercy of. It’s a technology that we have the power to co-create, if the political will exists.

Featured image: cottonbro studios / Unsplash